How to Read Statistical Analysis Report in Clinical Trials

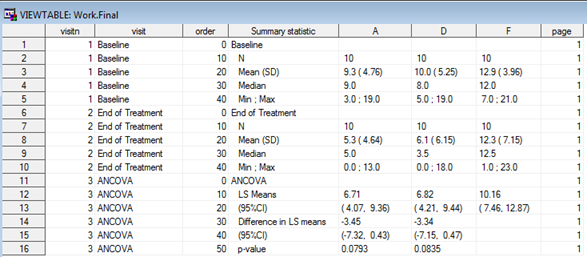

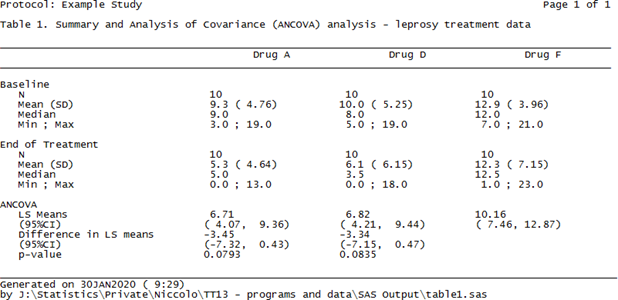

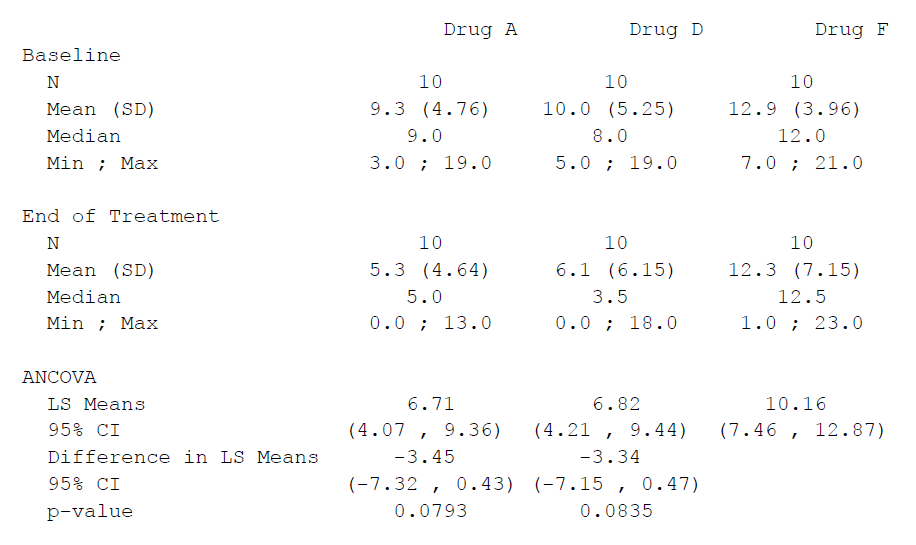

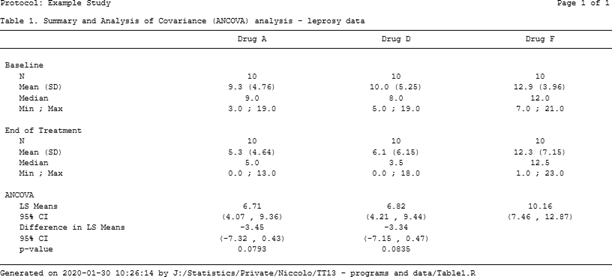

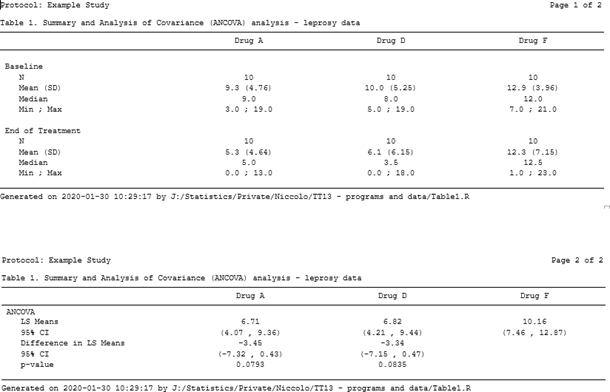

The use of R programming in clinical trials has not been the most popular and obvious, despite its contempo growth over the past few years, its applied use yet seems to be hindered by several factors, sometimes due to misunderstandings, (e.yard validation) but too considering of a lack of knowledge of its capabilities. Despite these bottlenecks, though, R is doubtlessly creating its ain (larger past the day) niche in the pharmaceutical manufacture. In this blog we volition see how R can be used to create TLFs much like the electric current combination of PROC Written report/PROC TABULATE and the ODS currently does, thus showing its power and adequacy to play an important role in our industry in the years to come up, non equally a replacement for, but rather as an alternative option to SAS®. Sentinel our statistical consultant explore the near suitable way of creating TLFs, be it R or SAS in the presentation below: Everyone involved in any capacity in clinical trials reporting is aware of a sort-of self-proclaimed truth that can be broadly summarized every bit 'Thou shall not have any software other than SAS'. Whilst this has been practically true for quite some fourth dimension, somehow based on the misconception that regulators 'strictly' requested submissions to be made using SAS, other players have started to make their appearance in the pharmaceutical manufacture arena and depict attending of statistical programmers and statisticians akin. The most famous of these is most certainly the open software R, which has a long runway record of usage in academia, both for practical and theoretical statistical research, and has been used to date besides in the pharma industry, though its adoption in submissions has been hindered by the above described misconception. Setting aside the validation part of the flow (which is a electric current hot area of word when it comes to R but volition not be discussed here), moving from SAS to R for such a consolidated task as Tables, Listings and Figures (TLFs) creation does require some re-thinking. How the actual reporting works is a challenge as there is not a direct PROC REPORT counterpart that can exist used straight away to export results to an output file, but that is the only real claiming. Since in most cases the efficiency with which a software can perform a task heavily depends on the ability of the cease-user to exploit all of its features, using R efficiently (the aforementioned way we've been doing with SAS for quite a while now) simply implies getting used to it and getting to know all the 'arrows in its quiver' bachelor to attain a goal. In this blog we volition first run into how a specific TLF (including both summary and analysis sections) is unremarkably generated in SAS and then we'll see how the same table can be created and reported using R. No thing the software nosotros use, i.e. SAS or R, to create a tabular array or a listing (and to some extent also a figure) we'll have to manipulate a source dataset (or more than one) to create a dataset/data frame that to a certain extent mirrors a given table specification, and so feed this dataset to a procedure (in SAS) or function (in R) that 'translates' the dataset into a neatly formatted table. Still, whilst this workflow appears rather slim, there's a diverseness of decisional branches that demand to be considered before choosing how to practically implement it. One key matter to make up one's mind is the actual format of the output table or listing: in many cases tables are created every bit evidently text documents (.txt) which are then collated to a Give-and-take or PDF certificate using tools that are often external to the statistical software (east.g. VBA macros, UNIX scripts), only other feasible options include either creating tables as Rich Text Format (.rtf) or .doc/.docx files to read into Microsoft Discussion straight away (and as well in this example collation for reporting purposes is washed outside of the software) as well equally Portable Document Format (PDF) or html files. For the sake of illustration, we volition use information from a report on leprosy handling described in Snedecor and Cochrane seminal Statistical Methods book [1]. The information has been arranged as a 3-columns dataset: Drug, Baseline and End of Handling measurement (similar to a very simple CDISC ADaM BDS dataset, without subject ID as it's not relevant for the case). The goal is to produce a table so that for each of the iii treatment arms, basic summary statistics are displayed every bit well every bit a section with a statistical analysis that compares drugs A and D to drug F (the control arm). If we wanted to create this kind of tabular array using SAS we'd most likely utilize a combination of PROC MEANS/PROC SUMMARY/PROC UNIVARIATE for the first ii sections (depending on our preferences or existing visitor-specific standard macros) and east.m. PROC GENMOD/PROC MIXED for the analysis department (again, depending on our preferences, though in this uncomplicated example in that location's no clear advantage in using one vs the other) coupled with some information wrangling to put results together and have them in a dataset ready to feed into PROC Study, as in Figure 1 beneath. Figure one. SAS dataset to create ANCOVA tabular array Whichever the output destination, the cadre PROC REPORT specifications will exist pretty much the same, the primary difference being the demand to add together some style commands when eastward.1000. outputting to RTF to ensure the specifications are followed. The syntax for a obviously text file here would look as follows: title1 j = l "Protocol: Instance Study" j = r "Page 1 of ane"; title2 j = fifty " "; title3 "Table ane. Assay of Covariance (ANCOVA) - leprosy treatment data" j = l; proc report information = terminal headskip headline formchar( 2 )='_' spacing = 0 nowd eye; cavalcade (" " page visitn order _label_ A D F); define page / noprint order; define visitn / noprint club; define order / noprint order; define _label_ / "" display width = 33 flow; define A / "Drug A" display width = 22 center flow; define D / "Drug D" display width = 22 heart flow; define F / "Drug F" display width = 22 center flow; pause after visitn / skip; compute after folio; line @ ane "&line."; line @ 1 "Generated on %sysfunc(date(),date9.) (%sysfunc(time(),time5.))"; line @ ane "past &dir.table1.sas"; endcomp; run ; The overnice affair about the in a higher place commands is that the input dataset matches the shells only partially and full adherence can be obtained by using the plethora of built-in options that PROC REPORT features. Finally, in gild to have the to a higher place sent to a .txt file we tin can wrap the PROC Report call between two PROC PRINTTO calls (the first starting printing to an output file, the second resuming printing to SAS listing), whereas for a .rtf file we could utilize the ODS RTF command (syntax skipped hither), and for a .doc/.docx Word file the almost recent addition ODS Word (though the author hasn't had the chance to apply it yet). Figure 2 shows the resulting .txt file using the above PROC Report syntax. Figure 2. A .txt. table created using PROC Written report A criticism often directed at R, in particular from die-hard SAS users, is that it's too dispersed, with likewise many packages that quite often practice the same things merely provide results in a somehow inconsistent and incoherent manner. This tin can in fact be a great reward when it comes to manipulating data frames to summate simple summary statistics as well every bit fitting complex statistical models. Before moving onto the reporting chip, we will meet how the summaries and ANCOVA section of the tabular array tin can be apace created using R to illustrate how this flexibility tin can exist leveraged to achieve our purposes. The summary statistics sections of the table are usually created in SAS past passing the source dataset to one of PROC MEANS, PROC SUMMARY or PROC UNVARIATE and the resulting dataset has to exist re-formatted for our purposes. In society to practice the same in R we tin either manually write a function that calculates each summary and and then adjust it, using e.g. tapply or by: mean01 <- by(ancova, ancova$Drug, function(ten){ means <- colMeans(ten[,ii:3]) }) mean02 <- every bit.data.frame(t(sapply(mean01, I))) Note in the higher up the 2nd and third column in the data are the baseline and post treatment values, respectively. Also we can re-arrange the source data in long-format (i.e. adding numeric (VISITN) and character (VISIT) variables identifying the time-bespeak being summarized and so rely on ane of the many wrapper functions such as ddply from the plyr package to calculate everything for us and put it in a nicely arranged dataset (similar to a PROC MEANS output): descr01 <- ddply(ancova02, .(visitn, visit, Drug), summarize, due north = format(length(Drug), nsmall = 0), mean = format(round(mean(aval), 1), nsmall = 1), sd = format(round(sd(aval), two), nsmall = two), median = format(round(median(aval), 1), nsmall = 1), min = format(round(min(aval), ane), nsmall = 1), max = format(round(max(aval), 1), nsmall = 1)) This latter choice was chosen here for information technology returns a readily usable data frame with already formatted summary statistics that then needs to undergo the usual post-processing steps to obtain an object that mimics the table vanquish. By using some functionalities of the tidyr packet this can be easily achieved with no more programming efforts than it would using SAS. The analysis section office can then be programmed in its core contents in a very compact fashion: model <- lm(PostTreatment ~ Drug + PreTreatment, information = ancova) lsmeans <- emmeans(model, specs = trt.vs.ctrlk ~ Drug, adjust = "none") lsm_ci <- confint(lsmeans) The first line fits a linear model to the data; the second creates a list that contains ii elements, the Least Squares (or Estimated Marginal, in the emmeans package) Ways for each level of the factor 'Drug' aslope their 95% CI and their difference using a control reference class (in this case drug F); the third line is needed to create an object identical to the one created above that however stores the 95% CI for the differences instead of the p-value testing the hypothesis that the differences are equal to 0 (since the shell requires both). Once this section is post- processed using whichever combination of SQL-like language using sqldf or base of operations R functionalities, we cease up having the post-obit data frame: Creating this dataset took more lines of codes with SAS compared with R after excluding comments and bare lines equally well as 'infrastructure' lawmaking (i.e. setting of libnames and options for SAS and loading of required libraries for R), and using a consistent formatting mode (although R functions lend themselves more to firmness, i.e. one line of code for a function, much more than what SAS procedure statements need for ease of reading). Whilst this per se does non necessarily mean that R is more efficient than SAS (runtime on a large dataset or a more than complicated table would be a much ameliorate indicator), it suggests that R offers an elegant and meaty way of summarizing and analyzing data which is perfectly comparable to what SAS offers (and this is past no means a small matter!). Notably, the start departure compared to SAS is that here nosotros oasis't included the sorting variables VISIT/VISITN/ORD that were available in the SAS final dataset, the reason beingness that since they're not going to be in the printed study there is no indicate in having them in (since it would exist redundant including them only to take a selection that filters them out). Linked to this, the white lines between each department accept been effectively added in as empty rows to mimic the table trounce, something that in SAS we accomplished via the SKIP option in a Break BEFORE command. The full general 'take-home' message is that the data frame to written report needs to look exactly like the resulting tabular array, and that includes eventual blank lines, guild of the rows, 'grouping' variables (e.k. discipline ID in listings where simply the first occurrence row-wise is populated and the other are blanks). At this point, having created the dataset in a rather simple manner, is information technology as uncomplicated to consign it for reporting? As mentioned in the Introduction, there'southward no R version of PROC Study however in that location are tools and functions that permit this task to exist accomplished using a comparable amount of programming equally SAS with comparable quality. Whilst there are many options to reach this, not all of them are as seamless to use as PROC REPORT and the resulting output is sometimes non fully fit for purpose. The combination of the officer and flextable packages, however, provides the user with a rich multifariousness of functionalities to create squeamish-looking tables in Discussion format in a flexible manner. The first step is to convert the data frame to report into an object of class flextable using the flextable office and and so apply all styling and formatting functions that are needed to match the required layout. 1 useful feature of this bundle is that it allows the use of the 'pipe' %>%, a very popular operator that comes with the magrittr package and allows a neater programming past concatenating several operations on the aforementioned object. This translates in the below syntax: summary_ft <- flextable(summary05) %>% width(j = 2:4, width = 2) %>% width(j = i, width = 2.v) %>% height(peak = 0.15, part = "body") %>% align(j = ii:four, marshal = "center", office = "all") %>% align(j = i, align = "left", role = "all") %>% add_header_lines(values = paste("Table ane. Summary and Assay of Covariance (ANCOVA) analysis - leprosy data", strrep(" ", 30), sep = "")) %>% add_header_lines(values = paste("Protocol: Example study", strrep(" ", 93), "Page i of ane", sep = "")) %>% add_footer_lines(values = paste("Generated on ", Sys.time(), " by ", work, "Table1.R", sep = "")) %>% hline_bottom(edge = fp_border(color = "black"), part = "body") %>% hline_bottom(border = fp_border(color = "black"), part = "header") %>% border_inner_h(edge = fp_border("black"), part = "header") %>% hline(i = 1, border = fp_border("white"), function = "header") %>% font(font = "CourierNewPSMT", function = "all") %>% fontsize(size = 8, part = "all") One groovy reward of many functions inside flextable is that they tin be applied only to a subset of rows and/or columns by using standard R indexes, as we have washed above to specify different column widths and alignments. The actual reporting to a Word document is washed using the read_docx and body_add_flextable functions from the officer bundle: the first one opens a connection to a reference certificate (that in our case has been gear up to have a mural orientation, every bit common for TLFs) and the second adds the flextable object created above to the document. The actual reporting is and so done using the standard print function: doc <- read_docx("base.docx") %>% body_add_flextable(value = summary_ft) print(dr., target = "Table1.docx") The resulting output is displayed in Figure 3 below. Figure 3. A .docx table file created using flextable and officeholder In the above program there are a few things worth mentioning before we practise an bodily compare with SAS: As introduced at the beginning of this blog, the reporting of Tables using R is a conceptually different process compared to SAS, however nosotros tin meet that the key options that be in PROC Study too exist in the flextable package, as mentioned in Table 1. There are other features (e.m. the possibility to change font, font size, etc.) that can be mapped between the ii software, simply those mentioned below cover the most important ones. Table 1. Key reporting features – comparison between PROC REPORT and flextable Telescopic PROC REPORT pick/statement FLEXTABLE function Aligning columns center/left/right options in the define argument align office Width of columns width option in the ascertain argument width part Line above column names ' ' in the column statement (see proc report on page ii) border_inner_h function Line beneath cavalcade names headline option in proc report argument hline_bottom role Line at the bottom of the tabular array compute later _page_/<folio- variable> cake Add footnotes/titles footnoten/titlen statement /line statement in a compute afterward block (footnotes but) add_header_lines (titles) add_footer_lines (footnotes) Multicolumn header Embed columns in brackets: ("header" col1 col2) add_header_row using the colwidths option Skip lines break afterward <var> / skip none Page break intermission after <var> / page body_add_break (from the officer packet) 1 specific mention is needed for the concluding ii rows hither, i.east. the chance to 'suspension' the table in different ways, either past adding blank lines between rows or by moving to some other folio. Fixing the commencement outcome in R is relatively easy, every bit nosotros mentioned earlier, whereas for the second one a different approach needs to be taken that involves writing a general function that iteratively prints pages to the reference Word document and so adds page breaks. To do this we first need to create a variable page (every bit we'd unremarkably do in SAS if we wanted to avoid odd folio breaks), and then the role could await as follows: folio.break <- office(data, pagevar = "folio"){ ## Identify which variable stores the page number and how many pages npage <- 1:max(information[,pagevar]) which.pg <- which(names(data) == pagevar) ## Define the flextable object separately for each page for (i in npage) { ## Create the Page ten of y object for the title and identify which rows to display based on folio number npg <- paste("Folio", i, "of", max(npage), sep = " ") rows <- which(data[,which.pg] == i) #flextable object definition (use the npg object to ensure the right Page x of y header line is added ## Print the object out: if it is the start one add to base.docx otherwise add to the document with previous pages printed if (i == 1) { d oc <- read_docx("base.docx") %>% body_add_flextable(value = summary_ft) } else { doctor <- read_docx("Table1.docx") %>% body_add_break() %>% body_add_flextable(value = summary_ft) fileout <- "Table1.docx" print(doc, target = fileout) } } } One time a page variable is added to the reporting dataset to take the ANCOVA section displayed on a separate folio and the above role is applied, the result is the one displayed in Figure 4 (the actual spaces between tables have been reduced to fit this weblog). Figure 4. Multipage table using flextable and officeholder The only drawback with the above is that information technology implies having a page variable that correctly splits rows such that when printing there'due south no overflow to the next page, although this might reasonably exist an issue only with long tables such equally Agin Events or listings). In general, anyway, the two packages combined offer a great caste of flexibility that allows one to reproduce a table as with SAS using a somehow more than explicit report definition compared to PROC Written report, and the bodily amount of programming required is like to SAS. In the introduction we clearly stated that moving from SAS to R for TLFs implies some rethinking of the manner we approach clinical trial reporting. Whilst this is indeed true, as the previous section has shown, information technology is important to understand that this re-thinking is in fact merely minimal and somehow superficial: we'll still be creating output datasets that other programmers will try to match on (using either all.equal or compare_df) and and then exporting this to a suitable document outside of the statistical software. This being the case, the only question worth asking is 'Why?' In the author's view in that location are many answers to this question: At that place are certainly some cases where attempting to recreate SAS results using R and vice versa has generated more than ane migraine, just in most cases this occurs because the assumptions and default implementations differ (e.k. the optimization method for a given regression) rather than because of existent differences. Many of these accept been documented on the internet in mailing lists and help pages, and no doubt that the more than the manufacture starts making more consistent utilize of R more scenarios will sally, thus prompting improve knowledge of both software. The uptake of R equally software of selection within pharmaceutical companies and Contract Research Organizations (CROs) is something that many doubted to see during their lifespan, withal things are rapidly moving even in the pharmaceutical industry. Notwithstanding, many misconceptions about R still exist, non least whether information technology's a tool suitable for creating submission-set deliverables such every bit TLFs. In this blog we take seen that R can be an extremely powerful tool to create Tables and Listings using the officer and flextable packages and tools already bachelor (and for great figures ggplot2 is bachelor), and that by leveraging its high flexibility it is possible to obtain high- quality results with comparable efficiency and quality to standard SAS code. All in all, the current R vs SAS debate is not fair: SAS has been used in the industry for decades now and its pros and cons are our staff of life and butter now, just the aforementioned can't be said almost R which is often met with prejudice due to its open-source nature. Hopefully the above case and considerations have provided a different angle to this quarrel and why information technology does non need to exist a quarrel at all. Plow your validated trial information into interpretable information ready for biostatistical analysis. Using R Programming or SAS, Quanticate tin help you ameliorate understand the effect of your investigational production and it'southward condom and efficacy against your trial hypothesis with the creation of analysis datasets and production of TLFs. Submit a RFI and fellow member of our Concern Evolution squad will be in touch with yous shortly. [i] Snedecor GW, Cochran WG. Statistical Methods, sixth edition. Ames: Iowa Land University Press Overview of the R packages used in this web log. Package Clarification dplyr A fast, consequent tool for working with data frame similar objects, both in memory and out of memory. plyr A set of tools that solves a common ready of problems: you demand to break a big problem down into manageable pieces, operate on each slice and then put all the pieces back together. For example, you might want to fit a model to each spatial location or time indicate in your study, summarise data by panels or collapse loftier-dimensional arrays to simpler summary statistics. tidyr Tools to assist to create tidy data, where each column is a variable, each row is an observation, and each jail cell contains a single value. 'tidyr' contains tools for changing the shape (pivoting) and hierarchy (nesting and 'unnesting') of a dataset, turning securely nested lists into rectangular data frames ('rectangling'), and extracting values out of string columns. Information technology also includes tools for working with missing values (both implicit and explicit). emmeans Obtain estimated marginal means (EMMs) for many linear, generalized linear, and mixed models. Compute contrasts or linear functions of EMMs, trends, and comparisons of slopes. Plots and compact letter displays. sqldf The sqldf() office is typically passed a single statement which is an SQL select statement where the table names are ordinary R data frame names. sqldf() transparently sets up a database, imports the data frames into that database, performs the SQL select or other statement and returns the result using a heuristic to determine which form to assign to each column of the returned information frame. flextable Create pretty tables for 'HTML', 'Microsoft Word' and 'Microsoft PowerPoint' documents. Functions are provided to let users create tables, modify and format their content. Information technology extends parcel 'officer' that does not contain whatever feature for customized tabular reporting and can be used within R markdown documents officer Access and manipulate 'Microsoft Word' and 'Microsoft PowerPoint' documents from R. The packet focuses on tabular and graphical reporting from R; it also provides two functions that permit users get document content into information objects. A ready of functions lets add and remove images, tables and paragraphs of text in new or existing documents. When working with 'Word' documents, a cursor can be used to help insert or delete content at a specific location in the document. The package does not require any installation of Microsoft products to exist able to write Microsoft files. magrittr Provides a mechanism for chaining commands with a new frontward-piping operator, %>%. This operator will forward a value, or the result of an expression, into the next office call/expression.

R versus SAS for TLFs

Generating an Analysis Tabular array using SAS

Generating an Analysis Table using R

fileout <- "Table1.docx" print(md, target = fileout)

Can R Rival SAS in TLF Creation?

Conclusion

References

Appendix

https://cran.r-project.org/

Source: https://www.quanticate.com/blog/r-programming-in-clinical-trials

0 Response to "How to Read Statistical Analysis Report in Clinical Trials"

Post a Comment